And here the target data warehouse is MongoDB cloud.įinally, for loading the dataframe into mongodB cloud we have the quickest way. Load is the process of moving transformed data from a staging area into a target data warehouse. New_df = df.drop(columns=)Īfter Transformation the resultant dataset look like: STEP 3. Now the code for transforming the dataset is given below : # Transform data using pandas dataframeĭf = pd.DataFrame(table_rows,columns=["upc","title", So, we clean the data dataset using pandas dataframe.

Here suppose we don’t require fields like product class, index_id, cut in the source data set. There are some basic transformation given below:Īfter gathering the data from extraction phase, we’ll go on to the transform phase of the process. Transformation refers to the cleansing and aggregation that may need to happen to data to prepare it for analysis. Getting started with Jupyter Notebook STEP 2. You can use Jupyter Notebook to execute the above code. Print("Error while connecting to MySQL", e) Mycursor.execute("SELECT * from diamond_record") # executing the query to fetch all record from diamond record Print("You're connected to database: ", record) Print("Connected to MySQL Server version ", db_Info) # getting theĬursor.execute("select database() ") # selecting the database diamond # creating a connection to mysql database Now the code for extracting the dataset from MySQL to python is given below :Ĭonnection = nnect(host='localhost’,database='diamond’,user='root',password='****') Here we have to extract the table diamond_record from diamond database of MySQL and the source dataset is given below: We need to connect mongo, and mysql so we will import pymongo and mysql connector. We will start by importing the libraries that will be useful. Since we are working with Python and python is famous for its build in library to accomplish these tasks. Extracting the data from data source MYSQL. Let’s look at different steps involved in it. We will try to create a ETL pipeline using easy python script and take the data from mysql, do some formatting on it and then push the data to mongodb. Creating a simple ETL data pipeline using Python script from source (MYSQL) to sink (MongoDB). Here we do not use any ETL tool for creating data pipeline. The list of best Python ETL tools that can manage well set of ETL process are: Python ETL tools are fast, reliable and deliver high performance. 
Python ETL tools are generally ETL tools written in Python and support other python libraries for extracting, loading and transforming different types of tables of data imported from multiple data sources into data warehouses. Using the programming capabilities of python, it becomes flexible for organizations to create ETL pipelines that not only manage data but also transform it in accordance with business requirements.

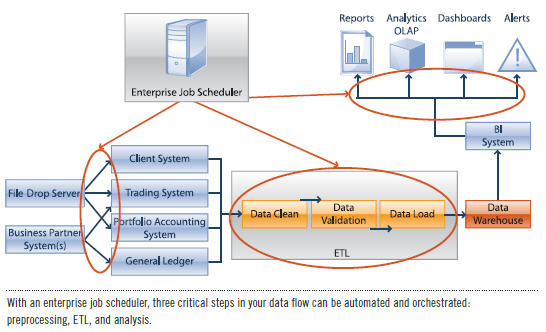

This could involve extracting, transforming and loading it onto the new infrastructure. What are ETL Tools?ĮTL tools are tools which have been developed to simplify and enhance the process of transferring raw data from a variety of systems to a data analytics warehouse. It’s a process of extracting huge amounts of data from a variety of sources and transforming the extracted data into a well organized and readable format via techniques like data aggregation and data normalization and finally at last loading it into a storage system like database, data warehouses. In this article, we tell you about ETL process, ETL tools and creating a data pipeline using simple python script.Įxtract-Transform-Load (Source: Astera) What is ETL process?ĮTL is a process in data warehousing which stands for Extract, Transform and Load. It is very easy to build a simple data pipeline as a python script. The goal is to take data which might be unstructured or difficult to use and serve a source of clean, structured data. An ETL pipeline is a fundamental type of workflow in data engineering.
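The transform and load steps described in this article can be sketched roughly as follows. This is a minimal illustration, not the article's exact code: the sample rows, every column name other than `upc` and `title`, and the helper names `transform` and `load` are assumptions made for the example. The rows stand in for what `cursor.fetchall()` would return from `diamond_record`.

```python
import pandas as pd

# Hypothetical rows standing in for the result of cursor.fetchall().
# Only "upc" and "title" appear in the article; the other column names are assumed.
COLUMNS = ["index_id", "upc", "title", "product_class", "cut", "price"]
table_rows = [
    (1, "0001", "Round diamond", "A", "Ideal", 326.0),
    (2, "0002", "Oval diamond", "B", "Premium", 334.0),
]


def transform(rows, columns, unwanted):
    """Build a DataFrame from raw rows and drop the fields that are not required."""
    df = pd.DataFrame(rows, columns=columns)
    return df.drop(columns=unwanted)


def load(df, uri, db_name, collection_name):
    """Insert each DataFrame row as one document into a MongoDB collection."""
    # Imported here so the transform step can run without pymongo installed.
    from pymongo import MongoClient

    client = MongoClient(uri)
    try:
        records = df.to_dict("records")  # one dict per row
        result = client[db_name][collection_name].insert_many(records)
        return len(result.inserted_ids)
    finally:
        client.close()


# Drop the fields the article says are not needed.
new_df = transform(table_rows, COLUMNS, unwanted=["index_id", "product_class", "cut"])
print(new_df)
```

To push the result to MongoDB, one could then call `load(new_df, uri, "diamond", "diamond_record")`, where `uri` is your own MongoDB connection string and the database and collection names are placeholders. Converting the dataframe with `to_dict("records")` and passing it to `insert_many` writes all rows in a single call, which is one plausible reading of the "quickest way" mentioned for the load step.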
